
hi, i'm chaeyun. i'm currently a second-year PhD student at KAIST's Graduate School of AI, advised by Juho Lee. my research is about making language models more honest about what they don't know. as LLMs are increasingly used in high-stakes decisions, the gap between how confident a model sounds and how often it is actually correct becomes a real problem. i work on uncertainty calibration: methods that close that gap, so you can actually trust a model's outputs. before this, i completed my MS in AI at KAIST and my BS in Statistics and Computer Science at SKKU.

outside of research, i've been lifting weights for about six months now and can't seem to stop, and lately i'm also obsessed with hiking.

Email: jcy9911[at]kaist.ac.kr / GitHub / Google Scholar / LinkedIn / X

Industry Experience


Publications


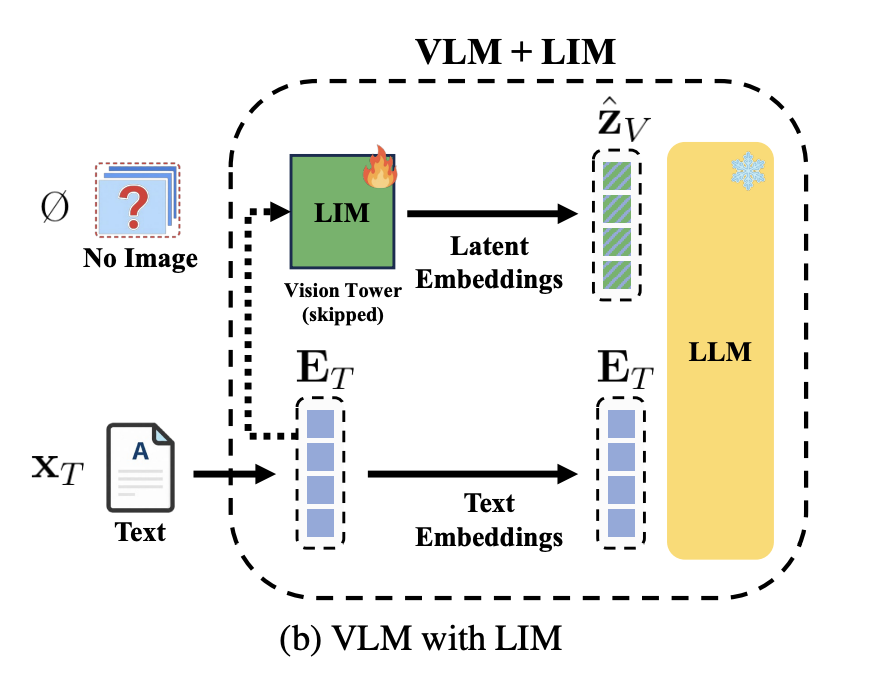

Bridging the Missing-Modality Gap: Improving Text-Only Calibration of Vision Language Models
Mingyeong Kim, Jungwon Choi, Chaeyun Jang, Juho Lee
ICLR Trustworthy AI Workshop, 2026


Reliable Decision-Making via Calibration-Oriented Retrieval-Augmented Generation
Chaeyun Jang, Deukhwan Cho, Seanie Lee, Hyungi Lee, Juho Lee
NeurIPS, 2025
arxiv / code


Verbalized Confidence Triggers Self-Verification: Emergent Behavior Without Explicit Reasoning Supervision
Chaeyun Jang, Moonseok Choi, Yegon Kim, Hyungi Lee, Juho Lee
ICML R2-FM Workshop, 2025
arxiv


Dimension Agnostic Neural Processes
Hyungi Lee, Chaeyun Jang, Dongbok Lee, Juho Lee
ICLR, 2025
arxiv


Model Fusion through Bayesian Optimization in Language Model Fine-Tuning
Chaeyun Jang, Hyungi Lee, Jungtaek Kim, Juho Lee
NeurIPS, 2024 (Spotlight, top 2.1%, 327/15671)
arxiv / code

Invited Talks


Teaching Assistant


Conference Reviewer


Click here to see the papers I am currently reading.